Can Vibe Coding Handle Your Organization's Data?

Vibe coding has established itself in 2026 as a common practice across development teams. The question that CIOs, CDOs, and data leaders are now asking is legitimate: if AI can generate a data cleaning script in thirty seconds, what is the point of a dedicated platform?

This article answers that question objectively — distinguishing what vibe coding genuinely does well from the domains where it structurally falls short on organizational data.

What Is Vibe Coding in 2026?

The term was coined by Andrej Karpathy, co-founder of OpenAI, in February 2025. It describes a development approach where you describe your intent in natural language and let AI generate the code — without necessarily reading it line by line.

Collins Dictionary named it Word of the Year 2025. Google Trends shows a 6,700% increase in searches since January 2025. Today, 92% of US developers use AI tools daily, and 46% of all new code worldwide is AI-generated (Second Talent, 2026).

In February 2026, Karpathy himself declared the concept "passé" — in favor of a more structured engineering approach where humans supervise and validate. Despite this, the practice continues to spread inside organizations, often outside any governance framework.

Applied to data, vibe coding translates into instructions like: "Generate a script to clean my customer database, remove duplicates, and normalize postal codes." The result arrives in thirty seconds. It works in testing. The problems appear in production.

Where Vibe Coding Delivers Real Value

Vibe coding presents real advantages in specific contexts. It would be inaccurate to dismiss it entirely.

Data exploration is its natural terrain: quickly analyzing the structure of a new dataset, identifying patterns, generating a one-off report on a limited volume. For these use cases, the speed it provides is a concrete advantage.

Business rule prototyping is another valid use case: testing deduplication logic on a sample, validating an approach before formalizing it in a production pipeline.
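
As an illustration of what such a prototype might look like, here is a minimal Python sketch of a deduplication rule tried on a three-row sample. The field names (`name`, `email`), the matching rule, and the sample records are assumptions made for this example, not a recommended production rule.

```python
import unicodedata

def normalize_key(name, email):
    """Build a comparison key: accent-stripped, case-folded name plus lowercased email."""
    decomposed = unicodedata.normalize("NFKD", name)
    ascii_name = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return (" ".join(ascii_name.casefold().split()), email.strip().lower())

def deduplicate(records):
    """Keep the first record per key; return (kept, dropped) so dropped rows can be reviewed."""
    seen = {}
    dropped = []
    for rec in records:
        key = normalize_key(rec["name"], rec["email"])
        if key in seen:
            dropped.append(rec)
        else:
            seen[key] = rec
    return list(seen.values()), dropped

# Hypothetical sample: rows 1 and 2 differ only by accents, case, and spacing.
sample = [
    {"id": 1, "name": "Élodie Martin", "email": "elodie.martin@example.com"},
    {"id": 2, "name": "elodie  martin", "email": "Elodie.Martin@example.com "},
    {"id": 3, "name": "Elodie Martin", "email": "e.martin@example.com"},
]
kept, dropped = deduplicate(sample)
print("kept:", [r["id"] for r in kept], "dropped:", [r["id"] for r in dropped])
```

Returning the dropped rows alongside the kept ones lets a business owner check the rule's decisions on the sample before anyone formalizes it.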

Short-lived projects — internal scripts with no regulatory stakes, one-off automations on non-critical data — also fall within appropriate scope.

Outside these contexts, the structural limitations of vibe coding become operational risks.

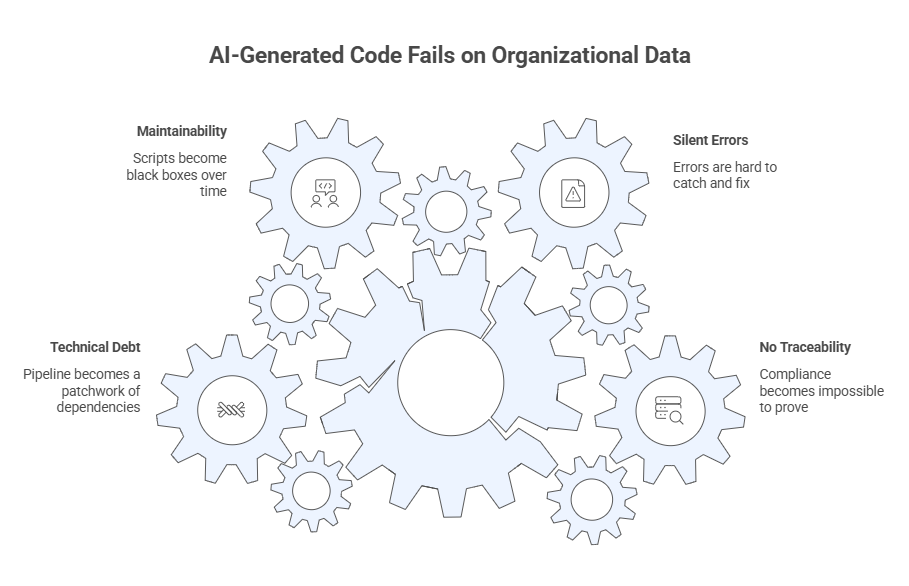

Why Vibe Coding Fails on Production Data

No Traceability: GDPR Compliance Becomes Impossible to Prove

GDPR, BCBS 239, NIS2, and the Cyber Resilience Act all require complete traceability of data processing operations. An AI-generated script does not document its transformations. It produces no lineage. It cannot demonstrate, in an audit, that personal data was processed in accordance with legal obligations.

Legal accountability for those processing operations remains entirely with the organization. Regulators do not accept "automatically generated by AI" as proof of compliance.
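
To make the gap concrete, here is a minimal Python sketch of the kind of record a traceable transformation step could emit. The `run_with_lineage` helper, the record fields, and the sample data are hypothetical. The sketch only shows the idea of lineage (what ran, when, on which input, producing which output) and is not on its own sufficient evidence for any regulation.

```python
import hashlib
import json
from datetime import datetime, timezone

def fingerprint(rows):
    """SHA-256 over a canonical JSON dump, so identical data always yields the same hash."""
    payload = json.dumps(rows, sort_keys=True, ensure_ascii=False).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def run_with_lineage(step_name, transform, rows, log):
    """Apply a transformation and append a lineage record describing the run."""
    result = transform(rows)
    log.append({
        "step": step_name,
        "executed_at": datetime.now(timezone.utc).isoformat(),
        "input_rows": len(rows),
        "output_rows": len(result),
        "input_sha256": fingerprint(rows),
        "output_sha256": fingerprint(result),
    })
    return result

def trim_emails(rows):
    """Example transformation: strip whitespace and lowercase the email field."""
    return [{**r, "email": r["email"].strip().lower()} for r in rows]

lineage_log = []
customers = [{"id": 1, "email": " Ana@Example.com "}]  # hypothetical record
cleaned = run_with_lineage("trim_emails", trim_emails, customers, lineage_log)
print(json.dumps(lineage_log[0], indent=2))
```

A script generated from a one-line prompt typically contains only the equivalent of `trim_emails`; the surrounding record is the part an audit asks for.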

Silent Errors That Are Hard to Detect at Scale

A language model optimizes for syntactically correct code — not for code that is accurate against your data, your business rules, your production environment.

A deduplication rule can be statistically plausible and operationally wrong. A normalization rule can work on 1,000 rows and silently corrupt millions of records over weeks before detection.
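
One way this can happen, sketched in Python under the assumption of five-digit postal codes stored as text: a rule that passes values through `int()` looks correct on a sample with no leading zeros, then silently shortens every code that starts with `0`. The function names and sample values are illustrative.

```python
def normalize_postal_code_naive(value):
    """Plausible-looking rule: strip whitespace, then round-trip through int()."""
    return str(int(str(value).strip()))

def normalize_postal_code_safe(value, width=5):
    """Treat the code as text, reject anything unexpected, and restore leading zeros."""
    digits = str(value).strip()
    if not digits.isdigit() or len(digits) > width:
        raise ValueError(f"unexpected postal code: {value!r}")
    return digits.zfill(width)

# The test sample happens to contain no codes with a leading zero.
sample = ["75001", " 69002", "33000"]
# Production data does.
production = ["75001", "01000", "06100"]

naive_sample = [normalize_postal_code_naive(v) for v in sample]
naive_production = [normalize_postal_code_naive(v) for v in production]
safe_production = [normalize_postal_code_safe(v) for v in production]

print(naive_sample)       # looks fine on the sample
print(naive_production)   # leading zeros are gone, with no error raised
print(safe_production)
```

The naive version raises no exception and returns values that still look like postal codes, which is why this kind of corruption can run for weeks before anyone notices.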

The data confirms it: 45% of AI-generated code contains exploitable security vulnerabilities (Georgetown CSET). AI-generated code produces 1.7x more major issues than human-written code at review (CodeRabbit, 2025). These statistics carry different weight when the subject is financial, customer, or HR data.

Maintainability Tied to the Script's Author

Over 40% of developers admit to deploying AI-generated code they do not fully understand (Deloitte, 2025). When the person who wrote that code changes roles or leaves the organization, the scripts become black boxes. No one can modify them safely. No one can explain them to an auditor.

In organizations where data teams evolve regularly, this pattern generates technical debt that accumulates invisibly — until an incident forces a full rebuild.

Technical Debt That Builds Without Warning

Each new business rule requires a new script. Each script adds a layer of dependency. Six months later, the pipeline is a patchwork that no one fully understands.

Google's DORA research measures the impact: as AI adoption in code increases without associated governance, delivery stability drops by an average of 7.2%. GitClear analyzed 211 million lines of code: the refactoring rate fell from 25% of code changes in 2021 to under 10% in 2024.

There is also an often-overlooked cost: the cost of iteration. When a vibe-coded script produces incorrect results in production — which it will — each cycle of debugging, regenerating, and correcting consumes time, compute resources, and API calls that accumulate in ways that are difficult to budget for. On complex data pipelines, these cycles can be numerous.

For an organization managing dozens of source systems and millions of records, this debt translates concretely into BI projects producing inconsistent results and AI models that hallucinate due to unreliable input data.

Vibe Coding or Data Platform: How to Decide?

| Situation | Vibe Coding | Structured Platform |
| --- | --- | --- |
| Exploring a new dataset | ✅ Appropriate | Overkill |
| Prototype < 10,000 rows, no regulatory stakes | ✅ Legitimate | Overkill |
| Production pipeline on customer or financial data | ❌ Structural risk | ✅ Built for this |
| Personal data subject to GDPR | ❌ No traceability | ✅ Native lineage |
| Compliance audit to produce | ❌ Cannot be reconstructed | ✅ Exportable in a few clicks |
| Business rules maintained by an evolving team | ❌ Author dependency | ✅ Pipelines readable by all |
| Feeding AI models in production | ❌ Non-certified data | ✅ AI-ready data |
| IT / business collaboration on data processes | ❌ Inevitable silos | ✅ Collaborative platform |
| Iteration and maintenance cost | ❌ API calls, re-generations, unpredictable debugging | ✅ Predictable cost, stable pipelines |

The boundary is not technical. It is organizational and regulatory: wherever data engages the organization's legal responsibility, vibe coding is not the appropriate tool.

What a Data Intelligence Platform Like Tale of Data Provides

Tale of Data is an AI-powered No-Code Data Intelligence platform. It covers the full data lifecycle through five core capabilities.

A catalog view and Mass Data Discovery (MDD) module to access all datasets and reference tables and automatically map the data landscape: databases, files, and cloud platforms such as Snowflake or Databricks. The MDD module surfaces the type, nature, statistics, and quality score of each column across every dataset. Without this visibility, every new data project starts from scratch.

A visual No-Code Flow Designer to build processing pipelines — joins, deduplication, normalization, enrichment, aggregation — without writing a single line of code. Every flow reads like a document. Any team member can understand it, modify it, and schedule it. When a colleague leaves, the work remains readable and maintainable.

A quality engine and Data Observability module that applies fixed, reproducible rules across the entire data estate. The MDD module continuously monitors data freshness, volume, quality, and schema, and triggers configurable real-time alerts. The same data produces the same results on every execution.

Built-in dashboards constructed by drag and drop directly within the platform, to visualize and monitor data health without an external tool.

Native Data Lineage accessible from the catalog, flows, and dashboards. Every transformation is traceable upstream and downstream. GDPR compliance proof lives in the platform — not in the inbox of a team member who left.
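
The idea of fixed, reproducible checks on freshness, volume, and schema can be illustrated with a short Python sketch. This is not Tale of Data's implementation or API. The `check_batch` function, the expected schema, and the thresholds are assumptions chosen for the example.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical expected schema: column name -> Python type.
EXPECTED_SCHEMA = {"id": int, "email": str, "updated_at": datetime}

def check_batch(rows, now, max_age=timedelta(hours=24), min_rows=1):
    """Return a list of alert strings; an empty list means the batch passed."""
    alerts = []
    # Volume: the batch must contain a minimum number of rows.
    if len(rows) < min_rows:
        alerts.append(f"volume: {len(rows)} rows, expected at least {min_rows}")
    # Schema: every row must have exactly the expected columns and types.
    for i, row in enumerate(rows):
        if set(row) != set(EXPECTED_SCHEMA):
            alerts.append(f"schema: row {i} has columns {sorted(row)}")
            continue
        for col, typ in EXPECTED_SCHEMA.items():
            if not isinstance(row[col], typ):
                alerts.append(f"schema: row {i} column {col!r} is not {typ.__name__}")
    # Freshness: the newest record must be recent enough.
    timestamps = [r["updated_at"] for r in rows if isinstance(r.get("updated_at"), datetime)]
    if timestamps and now - max(timestamps) > max_age:
        alerts.append("freshness: newest record is older than the allowed age")
    return alerts

now = datetime(2026, 1, 2, tzinfo=timezone.utc)  # fixed clock keeps the run reproducible
fresh = [{"id": 1, "email": "a@example.com", "updated_at": now - timedelta(hours=2)}]
stale = [{"id": "1", "email": "a@example.com", "updated_at": now - timedelta(days=3)}]

fresh_alerts = check_batch(fresh, now)
stale_alerts = check_batch(stale, now)
print(fresh_alerts)
print(stale_alerts)
```

Because the rules and the reference time are explicit inputs, running the same check on the same batch returns the same alerts, which is the property the quality engine description emphasizes.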

Real-World Case: Manutan and the Industrialization of Data Quality

Manutan is Europe's largest supplier of products to businesses: over one million product references, 17 countries, 28 subsidiaries. The quality of their product catalog data directly impacts operations — insufficient completeness on a product attribute generates customer returns, disputes, and margin losses.

Manutan's challenge was not to write a script. It was to industrialize repeatable, collaborative, maintainable data quality processes across that catalog, managed by teams that evolve over time.

Before Tale of Data, every data cleaning or reconciliation use case was handled through manually written Python scripts. Setup took a full week. Transferring knowledge between team members was complex. And every new use case started from scratch.

"From two different data sources, we set up a workflow and addressed our use case in two hours, whereas it previously took a week using Python scripts. We rolled out our use cases according to several important criteria for us with different solutions, and Tale of Data was the one that stood out."

Mbery Ngom, Data Quality Analyst, Manutan

The speed gain is real, but it is not the only point. Today, any team member can read, modify, and hand over that two-hour workflow without depending on its original author, without re-explanation, and without risk of regression. That is what a script, AI-generated or not, cannot guarantee.

Manutan selected Tale of Data following a competitive RFP, citing the industrialization quality, the collaborative features, and the ability to combine multiple data sources easily.

Conclusion: Can Vibe Coding Handle Your Organization's Data?

Vibe coding addresses a genuine need for speed in the early stages of a data project. But speed of generation does not replace the governance, traceability, and maintainability that organizational data requires in production.

Organizations that confuse the two find themselves, a few months later, with pipelines no one understands, audits impossible to produce, and AI models that fail due to unreliable input data.

Tale of Data combines the speed of a No-Code platform with the rigor of a deterministic quality engine, a living Data Catalog, and native traceability — so organizations can industrialize their data without sacrificing compliance or accumulating invisible technical debt.

→ Measure the cost of poor data quality with the Tale of Data ROI Calculator

FAQ: Data Quality Tool vs Vibe Coding

Vibe coding is well-suited for exploration and prototyping on limited volumes. It is not designed to manage production data in a regulated environment: it produces no traceability, does not document transformations, and cannot guarantee reproducibility of results at scale.

45% of AI-generated code contains exploitable security vulnerabilities (Georgetown CSET, 2025). On personal, financial, or HR data, these flaws can go undetected for weeks. Without automatic lineage or documentation, it is impossible to prove processing compliance during a GDPR audit.

No. An ETL handles industrial volumes, produces complete lineage, and maintains reproducible rules over time. Vibe coding generates functional code in the moment, with no guarantee of maintainability or traceability. The two tools address fundamentally different needs.

GDPR compliance requires that every data processing operation be documented, traceable, and auditable. This means complete lineage, automatic detection of personal and sensitive data, and formalized validation workflows. These elements must be native to the tool being used — not reconstructed after the fact.

Technical debt refers to the accumulation of undocumented, unmaintained scripts that are incomprehensible to anyone other than their original author. Google's DORA research shows that AI adoption in code without associated governance reduces delivery stability by an average of 7.2%. On critical data pipelines, this instability translates into inconsistent reports and degraded AI models.

Yes. Vibe coding can prototype a rule or explore a data source. A Data Quality platform industrializes, documents, and governs what goes into production. The two approaches are complementary — as long as they are not confused with each other.
